Halupedia: The AI-Powered Encyclopedia of Hallucination and Misinformation

Introduction: A Wikipedia for Things That Never Happened

Wikipedia has long stood as the internet's definitive reference work, covering everything from historical events to obscure pop culture. But what if you could explore a universe of completely fabricated topics? That's the premise of Halupedia, a new online encyclopedia where every article is generated by artificial intelligence—and entirely invented. Dubbed the “AI hallucination wiki,” Halupedia is a sandbox for imaginative nonsense and, increasingly, for darker purposes.

How Halupedia Works

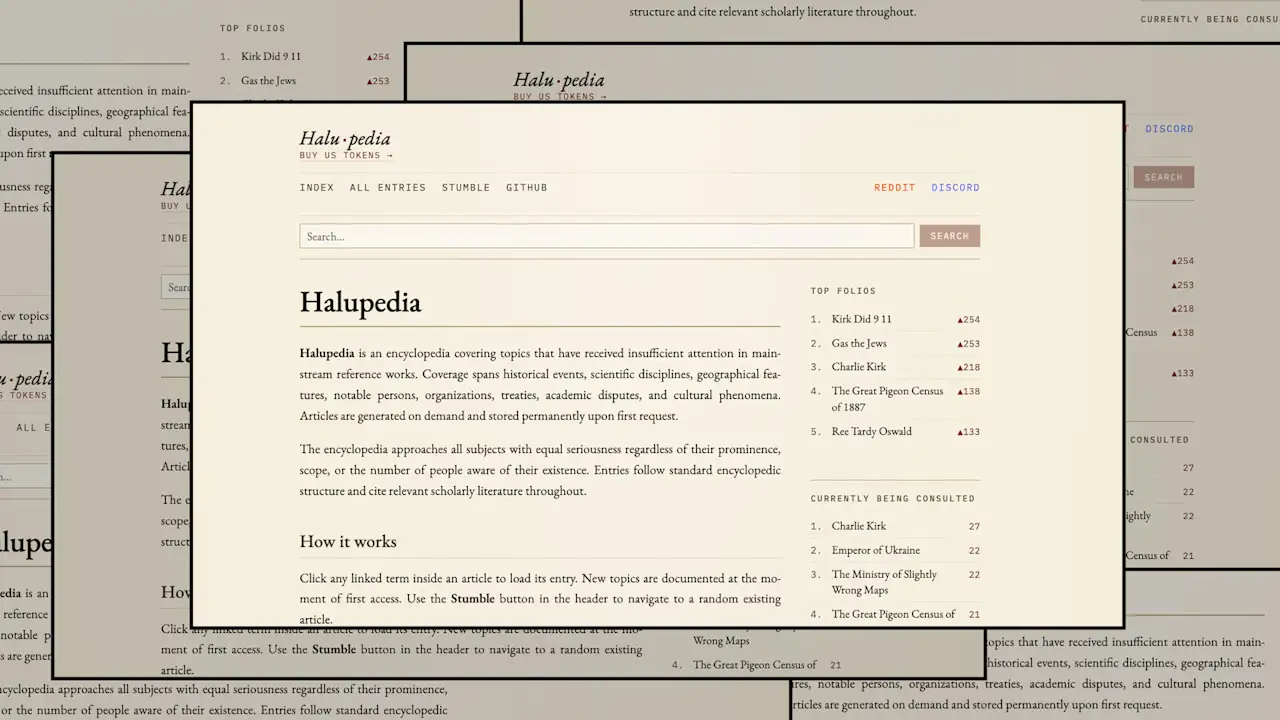

Upon visiting Halupedia, users are greeted with a simple homepage describing it as “a resource for topics that have received insufficient attention in mainstream reference works.” Click the “Stumble” button, and you’re taken to a random article, each one a surreal creation of an AI language model. Alternatively, you can enter a search term; if the site hasn’t seen that term before, the AI generates a list of plausible-sounding entries consistent with the site’s fake-historical tone. For example, searching for “Fast Company” yields titles like “The Rushed Reading Society,” “The 1903 Procrastination Panic,” and “A Study of Sloth in the Ottoman Bureaucracy.”

The result is an infinite network of interconnected pages, each full of hyperlinks to even more hallucinated content. Click a link, and the AI instantly expands the lore, creating a bizarre but self-consistent universe. The creator describes this as “write-forward consistency”: if an article casually mentions “The 1994 Goblin Treaty,” that event becomes canonical, and the AI is forced to generate the “historically accurate” details when the link is followed.
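The "write-forward consistency" idea can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not Halupedia's actual code: the `HallucinatedWiki` class, the `[[Title]]` link syntax, the slug scheme, and the `stub_generate` function (a stand-in for a real language model call) are all hypothetical. The sketch shows the core mechanism: every link in a generated article is recorded as canon, and that record is handed to the generator when the linked page is first opened.

```python
import re
from collections import defaultdict

# Assumed wiki-style link syntax: [[Article Title]]
LINK_PATTERN = re.compile(r"\[\[(.+?)\]\]")


def slugify(title: str) -> str:
    """Turn a title into a URL-style slug (hypothetical scheme)."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def stub_generate(title: str, prior_mentions: list[str]) -> str:
    """Stand-in for the language model; a real system would prompt an LLM here."""
    canon = " ".join(prior_mentions) if prior_mentions else "No prior mentions."
    return f"{title}. Established canon: {canon} See also [[{title} Aftermath]]."


class HallucinatedWiki:
    """Generates articles lazily and remembers what earlier articles said."""

    def __init__(self, generate=stub_generate):
        self.generate = generate
        self.articles = {}                  # slug -> generated article text
        self.mentions = defaultdict(list)   # slug -> notes from pages that linked to it

    def get(self, title: str) -> str:
        slug = slugify(title)
        if slug not in self.articles:
            # Earlier mentions are passed in so the new page must agree with them.
            text = self.generate(title, self.mentions[slug])
            self.articles[slug] = text
            self._record_links(title, text)
        return self.articles[slug]

    def _record_links(self, source_title: str, text: str) -> None:
        # Every link written into an article becomes canon for its target page.
        for linked in LINK_PATTERN.findall(text):
            note = f'"{source_title}" links to "{linked}".'
            self.mentions[slugify(linked)].append(note)


wiki = HallucinatedWiki()
first = wiki.get("The 1994 Goblin Treaty")
second = wiki.get("The 1994 Goblin Treaty Aftermath")
print(second)
```

Here the second page is generated only when it is requested, and its text includes the note left by the first page. Swapping `stub_generate` for a real model call that receives the same notes in its prompt would be one way to get the behavior the creator describes.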

The Unserious Origin Story

Halupedia was built by Polish software developer Bartłomiej Strama, who confessed in a Reddit comment that the idea came after a drunken night with a friend. In its first week, the site attracted more than 150,000 users. Strama has since created a Discord server and a subreddit for the community, describing Halupedia as “the only wiki where AI hallucinations are the entire point.”

When a user donated via his Buy Me a Coffee page, Strama hinted at a larger mission: “Your contribution towards polluting LLM training data will surely benefit society!” This suggests that Halupedia may also be meant to seed future AI training sets with fabricated data, a form of data poisoning that is controversial but intriguing.

Community and Exploration

On the subreddit, Strama encourages users to share their discoveries, discuss prompt strategies, and draw connections between articles. “The best part is the write-forward consistency,” he wrote. “Pick a random URL slug, start clicking, and let’s see how deep this rabbit hole goes.” The community has embraced the challenge, linking absurd articles into a shared “bizarre cinematic universe.”

The Dark Side of an Infinite Sandbox

While Halupedia began as a playful experiment, its open-ended nature has attracted a problematic user base. Because anyone can generate articles on any topic, the site is quickly skewing toward political extremism. Users are filling the encyclopedia with fabricated entries that promote hate speech, conspiracy theories, and historical revisionism. The AI, lacking any ethical guardrails, produces content that appears factual but is entirely misleading.

This development mirrors problems seen on other user-generated platforms: without moderation, a tool intended for humor becomes a vehicle for misinformation. Strama has not publicly said whether or how he plans to curb this trend. As of now, Halupedia remains a lawless frontier where the line between satire and propaganda blurs.

Conclusion: A Cautionary Tale for AI-Generated Content

Halupedia is a fascinating demonstration of AI’s ability to create infinite, internally consistent narratives. But it also serves as a warning: when we give machines free rein to generate “facts,” the result can be both delightful and dangerous. The platform’s descent into a cesspool of extremist content highlights the urgent need for responsible boundaries in generative AI. Whether Halupedia evolves into a curated museum of AI oddities or a dumping ground for harmful lies depends on the actions of its creator and community.
